Importing Google Analytics Data into Your Data Warehouse with Pentaho

Many companies that sell applications and games for desktop and mobile users build a data warehouse so they can create dashboards that show information about user patterns in these applications. By including web funnel data from your website into the data warehouse, you can go a step futher and get complete picture of your user acquisition and retention.

Prerequisites

Data Source

Our data source is Google Analytics. To access the data, we'll need access to the profiles via a username and password.

For the purposes of this project, I recommend creating a new Google Analytics account for this project and giving it read-only access to the specific profiles you want to retrieve. Since you'll need to enter this account's password in another piece of software, it's generally a better practice never to use your own accounts.

ETL Software

To get the data into your data warehouse, you will need software that can extract the data, transform it into the necessary format, then load it into your data warehouse. Such software is called Extract, Transform, and Load (ETL) software. For this project, I'll be using Pentaho Data Integration.

The ETL software will need to be able to connect to your data warehouse. You will also need the ability to create new tables and insert/update data into these tables.

Retrieving & Storing Data with Pentaho

Pentaho comes with a free connector that you can use to query data from Google Analytics. It uses the same syntax as the Google Analytics Core Reporting API to define filters.

Before you query Google Analytics, you'll need to grab the following information with the ETL:

- A date range

- The filters for each filter step you want

On top of that, we will hard-code the user authentication information, the dimension (time), and metric (visitors).

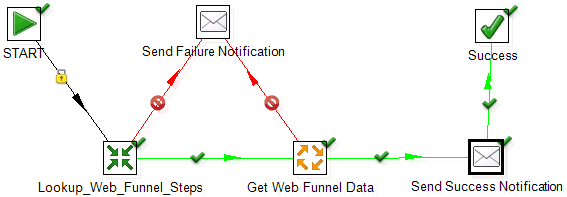

Job: Master

The main thing you should ensure is that the Get Web Funnel Data job is set to execute for every input row on the "Advanced" properties tab. This will cause it to execute for every row that is returned in the previous transformation where we look up the web funnel steps.

Beyond that, this job houses little direct logic. The notification emails are helpful if you schedule your ETL to run daily so you know whether or not it succeeded.

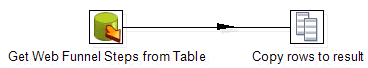

Transformation: Lookup Web Funnel Steps

To make the ETL more dynamic, it is helpful to store information about each funnel step in a database table. This will allow you to query for a new step in the future simply by adding a new row to this table. The table schema should look something like this:

- funnel_step_id

- auto-incrementing sequence (primary key)

- Calling it "funnel_step_id" instead of "id" will make things easier for software that automatically links related tables together

- name

- String representation of the funnel step for reports.

- Example: Landing Page Visitors

- ga_filter

- String representation of the filter that Google Analytics will use to execute the query for this funnel step.

- Example: ga:eventAction==Start Registration

The "Copy Rows to result" output ensures the data is carried over into the next step of the ETL.

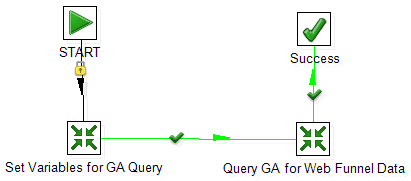

Job: Get Web Funnel Data

Like the master job, this one has little direct logic. It represents the loop that should execute for each funnel step that is returned from your earlier lookup.

Transformation: Set Variables for GA Query

This transformation is responsible for setting up the variables that you will need to feed into the actual query to Google Analytics.

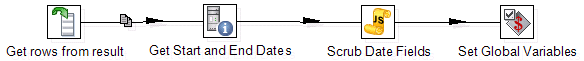

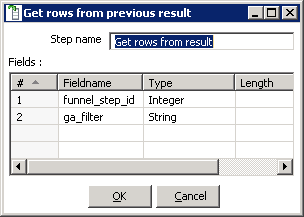

Get rows from result

For this input, you should specify each of the table columns from your lookup table that you'll eventually set as an environment variable:

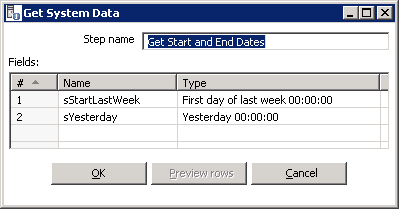

Get Start and End Dates

Because Google Analytics sometimes doesn't update their data for 24 hours, you want to be sure you're fetching data for the past few days so you can update days that might have incomplete data. The easiest way to do that is to use the start of last week for your beginning date and yesterday for your end date. This will mean you're always retrieving between 7-14 days of data.

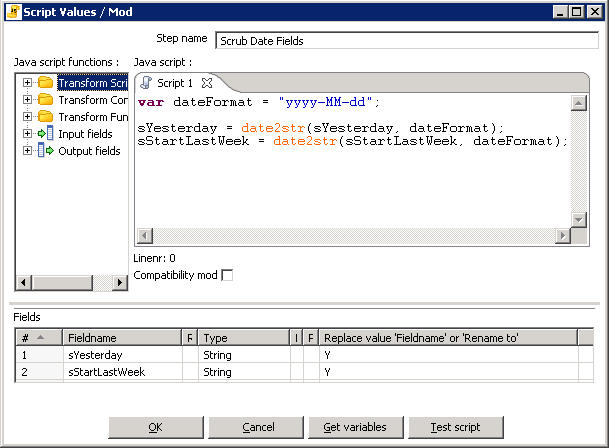

Scrub Date Fields

Pentaho's date format differs from the format you have to use to query Google Analytics. You can use JavaScript to transform the data into the proper format.

var dateFormat = "yyyy-MM-dd"; sYesterday = date2str(sYesterday, dateFormat); sStartLastWeek = date2str(sStartLastWeek, dateFormat);

Afterward, make sure you set up the fields to update with the new values in the table below the JavaScript text area:

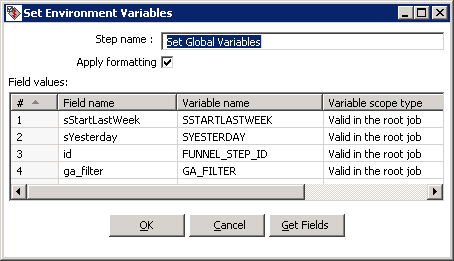

Set Global Variables

Now that you have all the variables you'll need for the query, make sure you set them with a scope of "Valid in the root job" so that other transformations and jobs have access to the them:

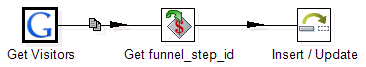

Transformation: Query GA and Store Results

At this point, the ETL has all the data it needs. All that is left is to execute the query and store the results.

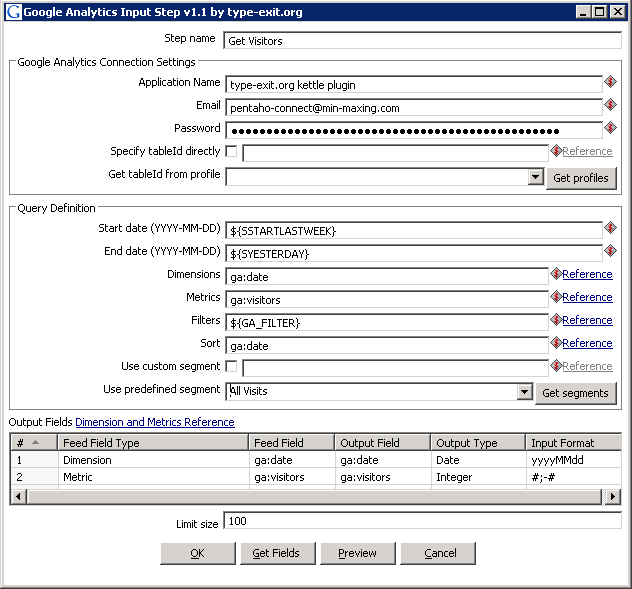

Get Visitors

Enter your user credentials, select the appropriate Google Analytics profile, then enter all of the static and variable information as part of your query definition:

Google Analytics will return several fields that you do not need. To remove them, select them in the "Output Fields" section, right-click on them, and choose "Delete selected lines" from the menu.

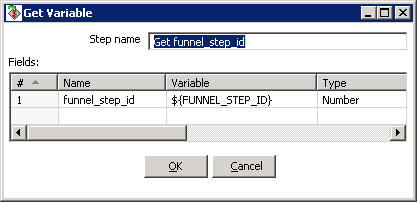

Get funnel_step_id

At this stage, the date and visitor count is part of your result set. You'll need to add the funnel_step_id to the result set so we can link this result to the correct funnel step in your lookup table:

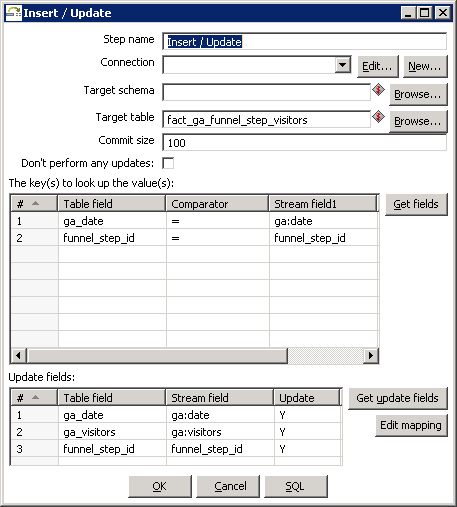

Insert / Update

Now that we have the date, funnel step, and visitor count in our result set, we can insert the data into our data warehouse (or update existing rows):

In the first table, make sure to specify both the date and the funnel_step_id as the keys to use to look up the values. If Pentaho finds an existing record for that date and funnel_step_id, it will simply update it with the new results rather than add a duplicate entry.

Next Steps

In one of my next articles, I will show how you can take the information you have just imported into your data warehouse and create funnel visualizations using QlikView. This will allow you to connect the dots between all of your funnels and allow you to visualize the end-to-end flow of your users.

If you liked this article, you may want to: